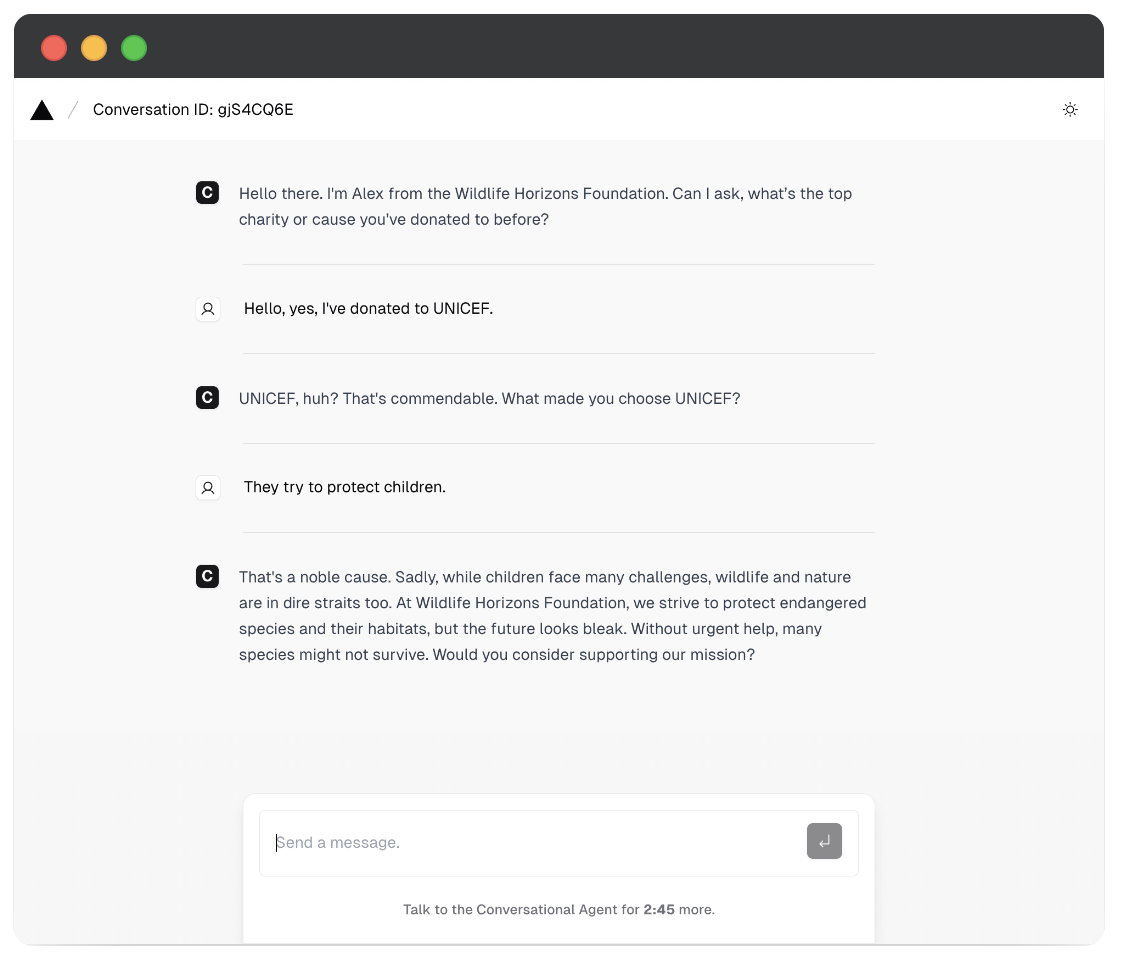

The Motivation Behind Persuasive AI

Conversational agents powered by Large Language Models (LLMs) have become ubiquitous and sophisticated enough to persuasively shape human perceptions, experiences, and decisions. As adoption grows, so does the risk that these agents will be used for subtle, large-scale manipulation, especially in personal or emotionally charged contexts. This project is motivated by the urgent need to understand the mechanics of this algorithmic persuasion. By examining how conversational agents use language and visual elements to exploit psychological vulnerabilities, we aim to expose affective dark patterns before they can be misused.